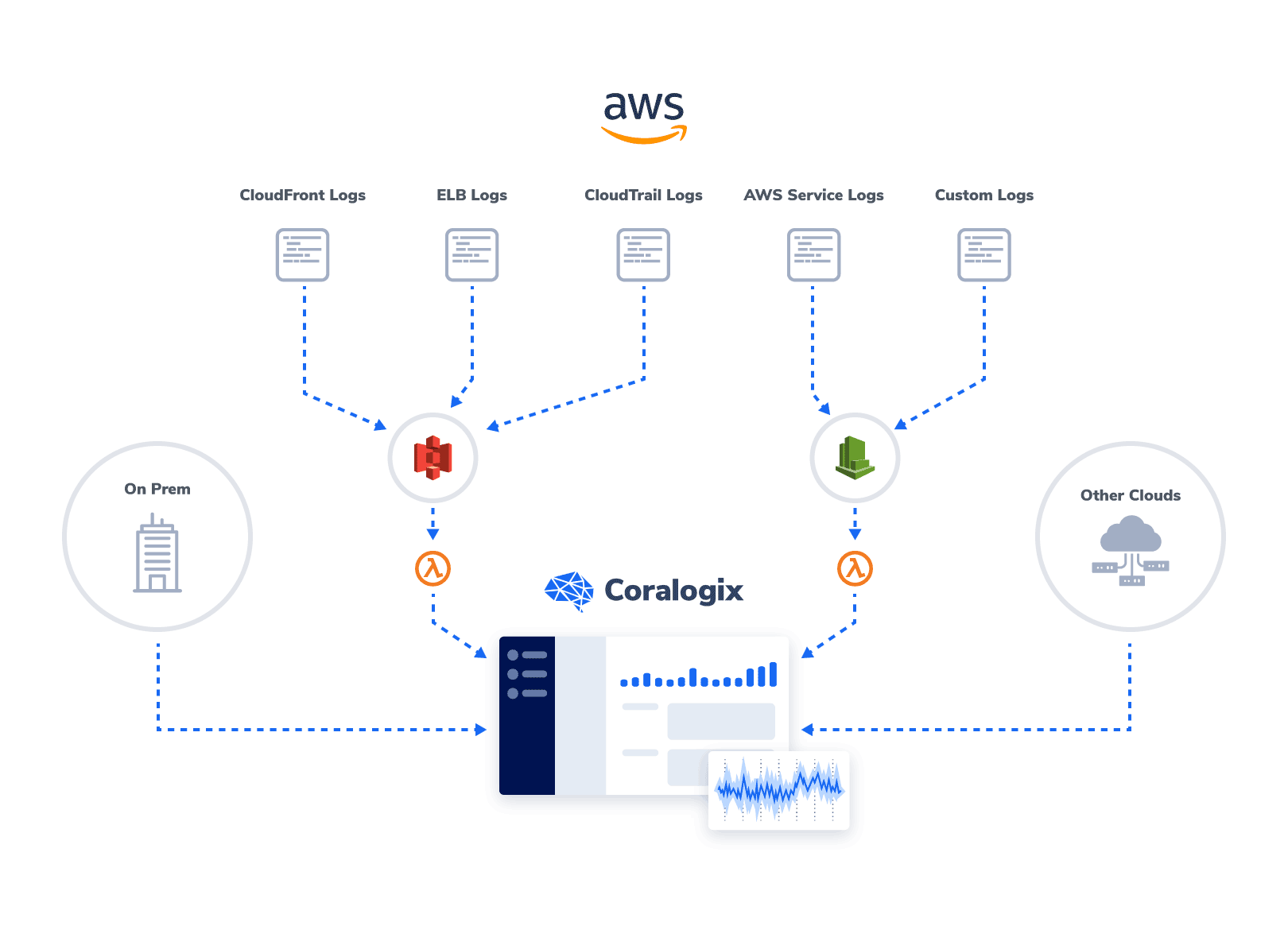

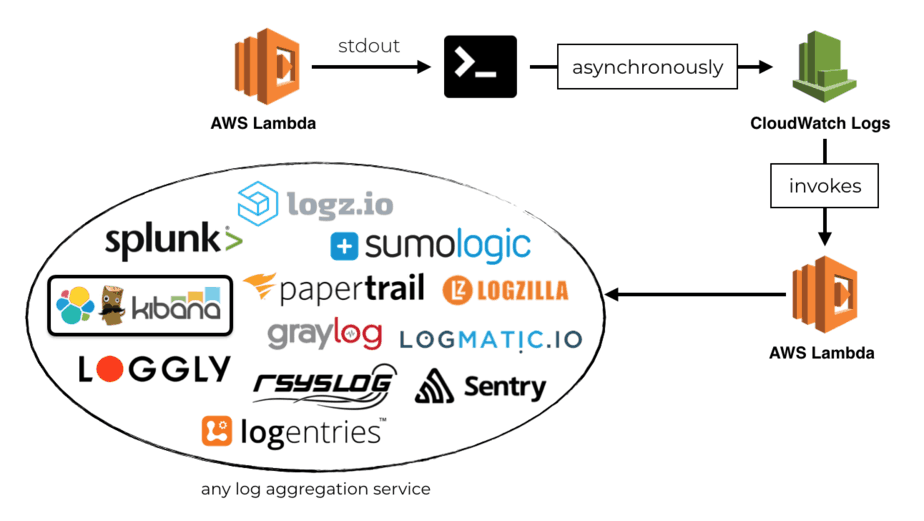

Amazon CloudWatch is a monitoring service for AWS cloud resources and the applications you run on AWS. While CloudWatch enables you to view logs and understand some basic metrics, it’s often necessary to perform additional operations on the data, such as aggregations, cleansing and SQL querying, which are not supported by CloudWatch out of the box. In this article we’ll present a reference architecture and key principles for storing your logs in analytics-ready format on Amazon S3, and then using Amazon Athena to query and analyze the data.

Example Business Scenario

A company’s IT department is using CloudWatch to monitor infrastructure and troubleshoot issues. After some data has accumulated, an IT analyst wants to explore the data using SQL in order to uncover deeper insights and trends that have emerged over time.

Why Amazon Athena for CloudWatch Logs?

Amazon Web Services offers several tools and databases that could be relevant for the use case we described: Redshift, Elasticsearch, CloudWatch itself and others. However, Athena offers several advantages:

- SQL access: Athena allows our analyst to query the data using the ANSI SQL she already knows and uses in a variety of other contexts.
- Joins and enrichments: Exporting the logs from CloudWatch enables us to perform various transformations on the data.
- Cost reduction: Athena’s serverless architecture means we can leverage inexpensive storage on S3 rather than costly database storage.

If those are the advantages of Athena, what are the drawbacks of the other ‘immediate suspects’ we might choose for log analysis?

Storing log files in CloudWatch Logs may be more expensive, depending on the amount of logging and how long you plan to retain the logs. You may want to configure CloudWatch Logs to export them to S3 after a period of time.
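As a rough illustration of the SQL exploration the analyst in the scenario might do once the logs are on S3, here is a minimal Python sketch that builds an Athena query counting error events per log stream for one day. The table name `cloudwatch_logs` and the columns `log_stream`, `event_date` and `message` are assumptions made for the example; your schema will depend on how the logs were exported and catalogued. The helper names are hypothetical too. Only `run_query` talks to AWS, using boto3's `start_query_execution`.

```python
from datetime import date


def build_error_count_query(table: str, day: date, limit: int = 20) -> str:
    """Build an ANSI SQL query counting ERROR events per log stream for one day.

    The column names (log_stream, event_date, message) are assumed for this
    sketch and must match whatever schema your exported logs actually use.
    """
    # Only allow simple identifiers, since the table name is interpolated into SQL.
    if not table.replace("_", "").isalnum():
        raise ValueError(f"unexpected table name: {table!r}")
    return (
        "SELECT log_stream, COUNT(*) AS error_count\n"
        f"FROM {table}\n"
        f"WHERE event_date = DATE '{day.isoformat()}'\n"
        "  AND message LIKE '%ERROR%'\n"
        "GROUP BY log_stream\n"
        "ORDER BY error_count DESC\n"
        f"LIMIT {int(limit)}"
    )


def run_query(sql: str, database: str, output_location: str) -> str:
    """Submit the query to Athena and return its execution id.

    Requires boto3 and AWS credentials. output_location is an S3 URI
    where Athena writes the result files.
    """
    import boto3  # imported lazily so the query builder works without AWS access

    athena = boto3.client("athena")
    response = athena.start_query_execution(
        QueryString=sql,
        QueryExecutionContext={"Database": database},
        ResultConfiguration={"OutputLocation": output_location},
    )
    return response["QueryExecutionId"]


if __name__ == "__main__":
    # Hypothetical table name and date, shown only to print the generated SQL.
    print(build_error_count_query("cloudwatch_logs", date(2020, 1, 15)))
```

Keeping the query builder separate from the AWS call means the SQL can be reviewed or tested locally before anything is billed against Athena.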

- If the logs never rotated before you terminated your instance then you will have a gap in your logging (unless Beanstalk sends a signal to the EC2 instance to tidy up before termination, but I haven’t tested this).
- By default there’s no S3 policy to delete these files, so they’ll just keep growing. There’s no configuration in the AWS console to set retention, unlike the CloudWatch log configuration. Thus, you’ll have to create a custom lifecycle rule specific to the S3 prefix (aka path).
- The logs are streamed from each EC2 instance to one centralised Log Group.
- Any terminated EC2 instances would have already transferred the logs in their live stream to CloudWatch - no more gaps in logging.
- Within the Elastic Beanstalk configuration, you can specify a retention period (e.g.
- The logs are searchable directly within the AWS Console (within the CloudWatch section).
- You can search by terms and/or date/time ranges.
- You can attach a Log Filter that triggers an alarm to send notifications, such as emails, when, for example, a number of Exceptions are seen within a short period of time or there are too many login attempts.
- AWS Elastic Beanstalk will not automatically attach the required CloudWatch policy to your EC2 instance role - even if you choose the option to stream, it won’t work until you learn how to add this policy.
- You need an ebextensions file that configures CloudWatch to stream your custom application logging, because it’s not included by default. In my opinion, it should be.
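The custom lifecycle rule for an S3 prefix mentioned above can be sketched in Python roughly as follows. This is a minimal example, not the exact setup from this post: the prefix `my-app/logs/`, the 30-day retention and the helper names are placeholders. The dictionary follows the shape that boto3's `put_bucket_lifecycle_configuration` expects.

```python
def build_log_expiry_rule(prefix: str, days: int) -> dict:
    """Build a lifecycle configuration that deletes objects under `prefix` after `days` days."""
    if days < 1:
        raise ValueError("days must be at least 1")
    # Derive a readable rule ID from the prefix, e.g. "my-app/logs/" -> "my-app-logs".
    rule_name = prefix.strip("/").replace("/", "-")
    return {
        "Rules": [
            {
                "ID": f"expire-{rule_name}-after-{days}d",
                "Filter": {"Prefix": prefix},  # the rule only applies to this path
                "Status": "Enabled",
                "Expiration": {"Days": days},
            }
        ]
    }


def apply_lifecycle(bucket: str, lifecycle: dict) -> None:
    """Apply the configuration to a bucket (requires boto3 and AWS credentials).

    Note: this call replaces the bucket's existing lifecycle configuration,
    so include any rules you want to keep.
    """
    import boto3  # imported lazily so the rule builder works without AWS access

    s3 = boto3.client("s3")
    s3.put_bucket_lifecycle_configuration(
        Bucket=bucket,
        LifecycleConfiguration=lifecycle,
    )


if __name__ == "__main__":
    # Placeholder prefix and retention period, shown only to print the rule.
    print(build_log_expiry_rule("my-app/logs/", 30))
```

Scoping the rule with a prefix filter keeps it from expiring anything else stored in the same bucket.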
